Autonomous Database (ADB) learning keeping myself pretty occupied these days. All credit should go to Oracle for their free offering of Oracle cloud technology university courses and a chance to certify. So planned to write a few important topics along the way while learning about ADB. This blog post will primarly focus on autonomous database (ADB) service available connectivity options, downloading database credentials and a procedure to connect to an ADB database service using the SQL * Plus tool.

An Autonomous Database (ADB) service can be connected through SQL * Net, JDBC thick/thin, ODBC processes etc. ADB connection uses certificate authentication and SSL, where certificate authentication uses encrypted key stored in a wallet files on both client and server. All client connections to the database must use SSL for encryption and under any circumstances it doesn't accept any unsecured connections.

To begin with, every ADB database connection must be made over a public internet and application should use a secure connection (SSL). Before establishing any sort of client connectivity to the ADB service, service client connect credentials must be downloaded from the OCI page for the respective database (instance) or region. Below procedure summarizes how to download database connect (wallet) credentials and use of SQL * Plus to connect to ADB.

Downloading database connection credentials

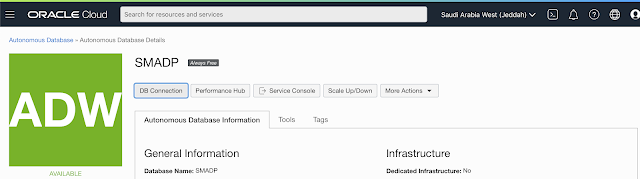

As discussed earlier, ADB only accepts secure connections to an Autonomous Database. Therefore, Oracle client credential (wallet) files which contains your connection credentials should be downloaded first. You need to download this files only once. The wallet can be downloaded either from Administration link from the ADB service console page, or from the DB Connection button on instance page of OCI page or through API calls. Use the following method to download the connection credentials from OCI console:

- Navigate to ADB details page and click on DB Connection button

- Based on the connectivity requirement, choose either Instance or Regional wallet type and click on the Download button

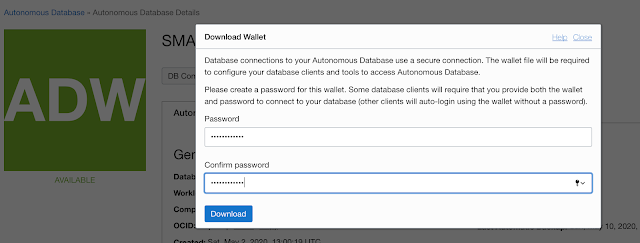

- The credentials file is protected with a password. So, you will have to input a password before downloading the file. The password must be 8 characters long, with at least 1 letter and either 1 numeric character or 1 special character

- Once the password is entered and confirmed, click the download button

- Default name for the file is Wallet_databaename.zip, and the zip files contains following files:

- tnsnames.ora & sqlnet.ora

- cwallet.sso & ewallet.p12

- keystore.jks & tuststore.jks

- ojdbc.properties &

- Unzip the file to a secure location

Predefined DB Service Names

The tnsnames.ora files contains the following predefined 5 database services names for Autonomous Transaction Processing (ATP) and 3 (high, medium, low) for Autonomous Data warehouse (ADW) databases:

- high A high priority app connection for reporting and batch operations which runs in parallel with little concurrency capacity, subject to queuing.

- medium A typical connection service for reporting and batch operations with limited (4) parallel degree.

- low A low priority connection service for reporting and batch operations with no parallelism options.

- tp (for ATP only), typical application connection service for transaction processing operations with no parallelism.

- tpurgent (ATP only) the highest priority application connection for time critical transaction processing operations. Can specify manual parallelism.

By default, sessions on ADB that are idle for over 5 minutes will be automatically disconnected from the database to make room for more active sessions.

SQL * Plus connection to ADB

For any sort of ADB connection, database credentials must be dowloaded by an individual user first. In this segment, we will discuss how to use an SQL * Plus tool to connect to ADB to execute regular SQL commands. Unlike other Oracle cloud options, ADB doesn't provide compute node access. So, you must have SQL * Plus installed on your computer. So, you have to install Oracle client software 11.2.0.4 or higher version on your system. Once the client software is installed, download connection credentials using the procedure described above.

Unzip/uncompress the zip file downloaded into a secure folder on your computer.

Edit the sqlnet.ora file and replace ?/network/admin text with name of the folder containing the credentials, as shown in the below example on a unix/linux client:

WALLET_LOCATION = (SOURCE = (METHOD = file) (METHOD_DATA = (DIRECTORY="/home/adw_credentials")))

SSL_SERVER_DN_MATCH=yes

It is highly recommended to set TNS_ADMIN environmental variable and set the location of the credentials files.

As previously stated, the connection must be through an internet. So the companies that doesn't provide direct internet connection, they will allow the access through HTTP proxy settings. In this case, you must update the sqlnet.ora and tnsnames.ora files as per below example if you have a firewall connection and requires HTTP proxy to connect internet (this scenario only support clients with 12.2.0.1 or higher version):

- sqlnet.ora should have this entry: SQLNET.USE_HTTPS_PROXY=on

- And the tnsnames.ora entry should contains the following:

ADWC1_high =

(description=

(address=

(https_proxy=proxyhostname)(https_proxy_port=80)(protocol=tcps)(port=1522)(host=adwc.example.oraclecloud.com)

)

(connect_data=(service_name=adwc1_high.adwc.oraclecloud.com)

)

(security=(ssl_server_cert_dn="adwc.example.oraclecloud.com,OU=Oracle BMCS US,O=Oracle Corporation,L=Redwood City,ST=California,C=US")

)

)

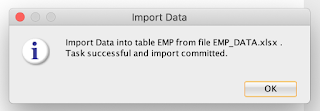

Once you have the client software and connect credentials ready, now you can use the sql * plus tool to connect to a ADB. Refer below syntax using low credentials service name here:

sqlplus sales_dwh@adwc1_low

SQL*Plus: Release 18.0.0.0.0 - Production on Wed Apr 3 15:09:53 2019

Version 18.5.0.0.0

Copyright (c) 1982, 2018, Oracle. All rights reserved.

Enter password:

Last Successful login time: Wed Apr 03 2019 14:50:39 -07:00

Connected to:

Oracle Database 18c Enterprise Edition Release 18.0.0.0.0 - Production

Version 18.4.0.0.0

SQL>

You can also see the last successful login time.

In nutshell, this post explained how to download ADB database connect credentials from various methods, how to connect to ADB using SQL * Plus tool. Will be discussing about JDBC thin client connectivity procedure in the next post.

References;

https://docs.oracle.com/en/cloud/paas/autonomous-data-warehouse-cloud/user/connect-sqlplus.html#GUID-A3005A6E-9ECF-40CB-8EFC-D1CFF664EC5A